There’s a joke people love repeating:

"AI is only as smart as the question you ask it."

It sounds clever. But after actually working with tools like ChatGPT or Claude for a while, you realise something more blunt:

Most bad AI results aren’t the AI’s fault. They’re lazy prompts.

Typing a one-line request and hoping for magic is like walking into a hardware store and saying:

"I need something."

You’ll get something. But not something useful.

The shift happens when you stop treating AI like a search engine — and start treating it like a junior collaborator who needs direction.

That’s where structured prompting comes in.

In this guide, I’ll break down the 6-part anatomy of a high-performance prompt — the same mental model experienced users rely on — and show you how to turn vague requests into outputs you can actually use.

First — What a Prompt Actually Is (Beyond the Definition)

Yes, technically a prompt is just “what you type into an AI.”

But that definition is too shallow to be useful.

A better way to think about it:

A prompt is a briefing document.

You’re not just asking a question. You’re setting expectations, constraints, and context — all at once.

If you’ve ever worked with a designer, developer, or writer, you already know this:

- A vague brief = disappointing work

- A clear brief = surprisingly good work

AI behaves exactly the same way.

Why Beginners Get Mediocre Results (Even With “Good” Tools)

After working with different models and helping people refine prompts, the same patterns show up every time:

1. They under-specify the task

“Help me grow my business” is not a task. It’s a wish.

2. They assume the AI understands context

It doesn’t. Not your market, not your constraints, not your audience.

3. They ignore format

If you don’t define structure, you’ll usually get a wall of text.

And here’s the uncomfortable truth:

Even a powerful model will give average output if your prompt is average.

This isn’t just opinion — guidance from Google Cloud on prompt engineering consistently emphasizes that clear, structured prompts directly improve output quality and relevance.

That’s not a limitation — it’s leverage.

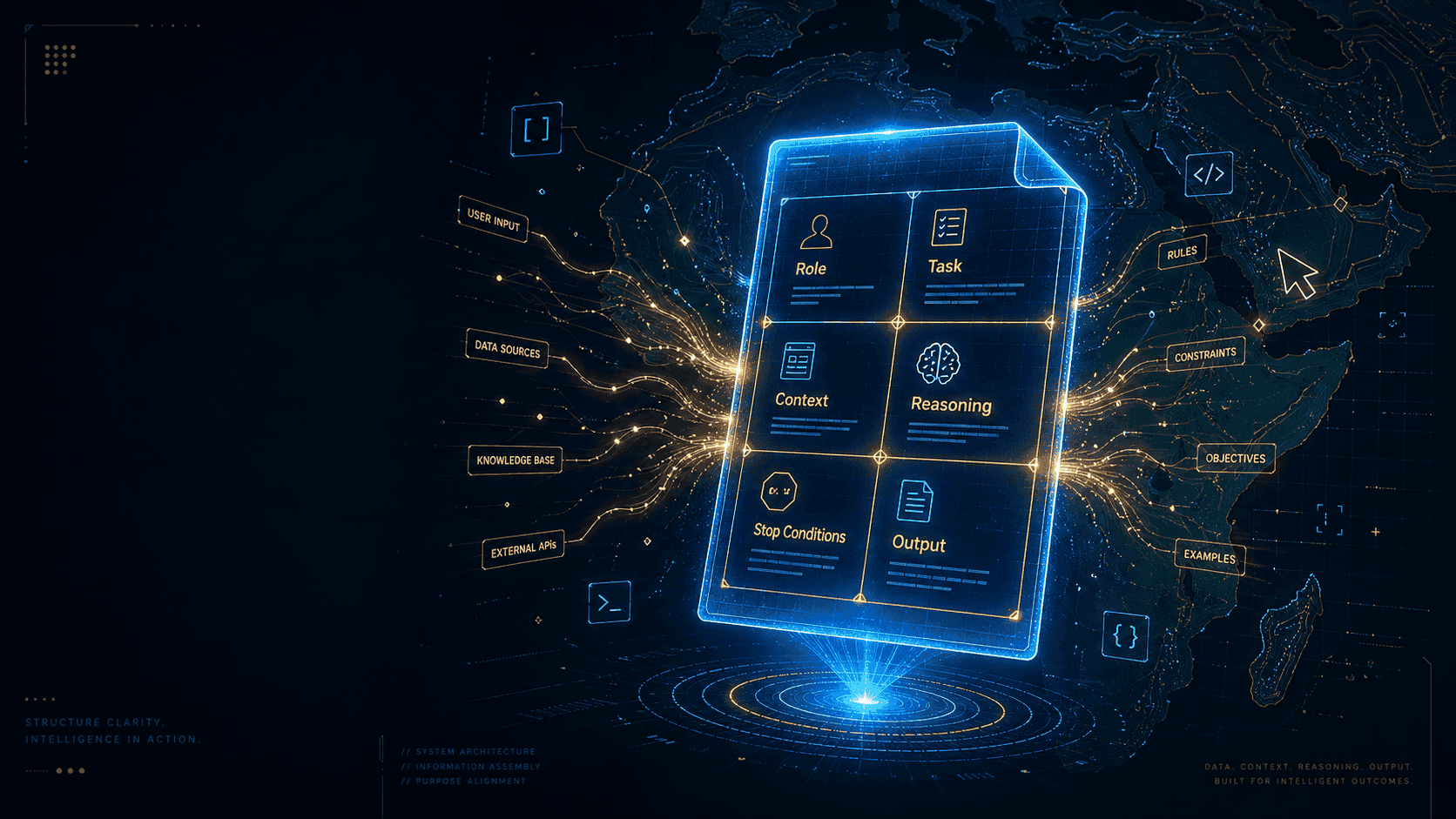

The 6-Part Anatomy of a High-Performance Prompt

This isn’t theory. It’s a practical framework used by people who rely on AI daily.

| Part | What it does |
|---|---|
| Role | Defines how the AI should think |
| Task | Defines what needs to be done |
| Context | Defines the situation |
| Reasoning | Defines the goal behind the task |
| Stop Conditions | Defines what “complete” means |
| Output | Defines how results should be delivered |

You don’t always need all six. But when a prompt really matters — you’ll feel the difference.

1. Role — Stop Asking AI to Be “Generic”

Most people skip this. That’s a mistake.

When you don’t assign a role, the AI defaults to something safe and generic. That’s why so many outputs sound like bland blog posts.

Instead, anchor it:

“Act as a digital marketing strategist who has worked with early-stage brands in East Africa.”

That single line changes:

- vocabulary

- depth

- assumptions

- examples

And more importantly — it removes generic advice.

Guides such as Learn Prompting describe role prompting as a way to control style and, in some cases, improve accuracy, though the gain varies by model and task.

Weak:

“Act as an expert”

Better:

“Act as a startup advisor focused on lean growth for small African businesses”

Specificity is what makes this work.

2. Task — Clarity Beats Cleverness Every Time

This is where most prompts fail quietly.

Compare:

❌ “Help me with a launch plan”

vs

✅ “Create a 60-day launch plan for a mobile-first savings app targeting university students in Uganda. Include weekly actions, onboarding strategy, and retention tactics.”

The second one works because it removes guesswork.

When writing tasks, always include:

- what you want

- who it’s for

- scope (timeframe, size, or depth)

- key components

If the AI has to “figure it out,” you’ve already lost precision.
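One way to make that checklist hard to skip is to fill in a small template before writing the task. The sketch below is illustrative only: `TaskSpec` and its field names are hypothetical, not part of any tool, and the wording of the rendered sentence is just one reasonable choice.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical container for the four task elements listed above."""

    what: str          # the deliverable you want
    audience: str      # who it is for
    scope: str         # timeframe, size, or depth
    components: list[str] = field(default_factory=list)  # key parts to include

    def missing(self) -> list[str]:
        """Return the names of any elements that were left empty."""
        values = {
            "what": self.what,
            "audience": self.audience,
            "scope": self.scope,
            "components": self.components,
        }
        return [name for name, value in values.items() if not value]

    def render(self) -> str:
        """Build the task statement, refusing to guess at missing elements."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"task is under-specified, missing: {', '.join(gaps)}")
        return (
            f"Create {self.what}. "
            f"Audience: {self.audience}. "
            f"Scope: {self.scope}. "
            f"Include: {', '.join(self.components)}."
        )


task = TaskSpec(
    what="a launch plan for a mobile-first savings app",
    audience="university students in Uganda",
    scope="60 days",
    components=["weekly actions", "onboarding strategy", "retention tactics"],
)
print(task.render())
```

If any of the four elements is empty, `render()` raises an error instead of producing a vague task, which is the same discipline the checklist asks for.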

3. Context — This Is Where the Magic Actually Happens

This is the most underrated part of prompting.

Context is what turns generic output into something that feels custom-built.

Think in terms of constraints:

- budget

- audience behavior

- platform (WhatsApp vs website matters a lot)

- team size

- local realities

Example:

“I run a small tailoring business in Kampala with 2 employees. Most customers come from Instagram. My problem is low repeat purchases.”

That one paragraph will outperform ten generic prompts.

Why?

Because now the AI is solving your problem — not a hypothetical one.

4. Reasoning — Tell It What Success Looks Like

This is where experienced users quietly outperform everyone else.

Most people describe the task. Very few explain the intent behind it.

That’s a missed opportunity.

Add one simple line:

- “The goal is…”

- “This matters because…”

- “I want this to…”

Example:

“The goal is to build trust and convert first-time buyers without relying on paid ads.”

This aligns the output with your real objective.

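This is loosely related to, but not the same as, chain-of-thought prompting. That work, from Google Research, found that prompting a model to produce intermediate reasoning steps improves results on complex reasoning tasks. Stating your goal works differently: it gives the model a target to aim for rather than a reasoning procedure to follow.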

5. Stop Conditions — The Difference Between “Okay” and “Done”

Without explicit completion criteria, AI often stops early.

It gives you something that looks complete — but isn’t.

Stop conditions fix that.

Examples:

- “Include at least 3 actionable ideas per week”

- “Add estimated costs in local currency”

- “End with a decision checklist”

This forces completeness.

Think of it as quality control built into the prompt.

6. Output — Don’t Fix Formatting Yourself

This is where you save time.

If you don’t specify format, you’ll spend time restructuring the answer anyway.

Be explicit:

- table vs paragraph

- bullet points vs narrative

- tone (formal, casual, WhatsApp-style)

- structure (columns, sections, headings)

Example:

“Present as a table with columns: Week, Action, Goal, Expected Result.”

Now it’s usable immediately.
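A fixed table format also makes the answer easy to check and reuse in a script. The sketch below assumes the model replied with a pipe-delimited (markdown-style) table, which is common but not guaranteed. The function name `parse_markdown_table` and the sample response are made up for illustration, and cells that themselves contain a `|` are not handled.

```python
EXPECTED = ["Week", "Action", "Goal", "Expected Result"]


def parse_markdown_table(text: str, expected_columns: list[str]) -> list[dict[str, str]]:
    """Parse the pipe-delimited rows in `text` into a list of row dicts."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # ignore any prose around the table
        rows.append([cell.strip() for cell in line.strip("|").split("|")])
    if not rows:
        raise ValueError("no table found in response")

    header, body = rows[0], rows[1:]
    if header != expected_columns:
        raise ValueError(f"expected columns {expected_columns}, got {header}")

    # Drop the separator row, whose cells contain only dashes and colons.
    body = [row for row in body if not all(set(cell) <= set("-: ") for cell in row)]
    return [dict(zip(header, row)) for row in body]


# Made-up response text, only to show the parsing step.
sample = """Here is the plan:

| Week | Action | Goal | Expected Result |
|---|---|---|---|
| 1 | Post customer photos | Build trust | More profile visits |
| 2 | Share a fitting guide | Educate buyers | More direct messages |
"""

rows = parse_markdown_table(sample, EXPECTED)
print(rows[0]["Action"])
```

If the header does not match the columns you asked for, the function raises an error, which is a quick signal that the prompt's Output line needs tightening.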

Putting It All Together (Real Example)

Weak version:

“Help me market my skincare business”

Structured version:

Role: Act as a digital marketing strategist experienced in African D2C beauty brands.

Task: Create a 60-day social media launch strategy for a natural skincare brand targeting Kenyan women aged 20–35.

Context: Brand name is “Asili Beauty.” Budget is limited (KES 15,000/month). Sales happen via Instagram and WhatsApp. Current audience is small (200 followers).

Reasoning: The goal is to build trust quickly and convert the first 20 paying customers without relying on paid ads.

Stop Conditions: Include weekly content ideas, at least 3 organic influencer strategies, and a launch checklist.

Output: Present as a weekly table with columns: Week, Content Theme, Post Ideas, Engagement Action, Expected Outcome.

That’s not just a better prompt.

It’s a usable system.
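If you build prompts like this often, the six parts can live in a small data structure instead of being retyped each time. This is a minimal sketch, not a standard tool: the class name `StructuredPrompt` and its `render` method are assumptions for illustration, and the values are the Asili Beauty example from above.

```python
from dataclasses import dataclass, fields


@dataclass
class StructuredPrompt:
    """Hypothetical holder for the six parts; any part may be left empty."""

    role: str = ""
    task: str = ""
    context: str = ""
    reasoning: str = ""
    stop_conditions: str = ""
    output: str = ""

    def render(self) -> str:
        """Join the non-empty parts as labelled paragraphs, in framework order."""
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if value:  # skip parts that were not filled in
                label = f.name.replace("_", " ").title()
                parts.append(f"{label}: {value}")
        return "\n\n".join(parts)


prompt = StructuredPrompt(
    role="Act as a digital marketing strategist experienced in African D2C beauty brands.",
    task="Create a 60-day social media launch strategy for a natural skincare brand "
         "targeting Kenyan women aged 20-35.",
    context='Brand name is "Asili Beauty." Budget is limited (KES 15,000/month). '
            "Sales happen via Instagram and WhatsApp. Current audience is small (200 followers).",
    reasoning="The goal is to build trust quickly and convert the first 20 paying customers "
              "without relying on paid ads.",
    stop_conditions="Include weekly content ideas, at least 3 organic influencer strategies, "
                    "and a launch checklist.",
    output="Present as a weekly table with columns: Week, Content Theme, Post Ideas, "
           "Engagement Action, Expected Outcome.",
)
print(prompt.render())
```

Because empty parts are skipped, the same structure works for quick prompts that only need two or three parts.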

Practical Advice (From Actually Using This Daily)

- Save prompts that work. Build your own library.

- Iterate instead of restarting. Refine the prompt you have; don’t reset.

- Start small, then expand. Begin with a few parts and use all six when it matters.

- Treat AI like a new hire. Capable and fast, but it needs direction.

The Framework (Quick Reference)

| # | Part | Summary |
|---|---|---|
| 1 | Role | Who the AI should act as |
| 2 | Task | What needs to be done |
| 3 | Context | Background information |
| 4 | Reasoning | Why it matters |
| 5 | Stop Conditions | What “done” means |
| 6 | Output | How results should look |

Final Thought

Better prompts don’t just improve answers — they reduce editing, rewriting, and frustration.

That’s the real win.

Use the framework once on a real task — and you’ll immediately feel the difference.